An entry no one had heard of a week ago now sits at #1 on Artificial Analysis’ Video Arena, beating Seedance 2.0, Kling 3.0, and Sora 2 Pro in blind user voting.

LOS ANGELES, CA, April 09, 2026 /24-7PressRelease/ — On April 8, 2026, the AI side of X was overrun by a very happy horse.

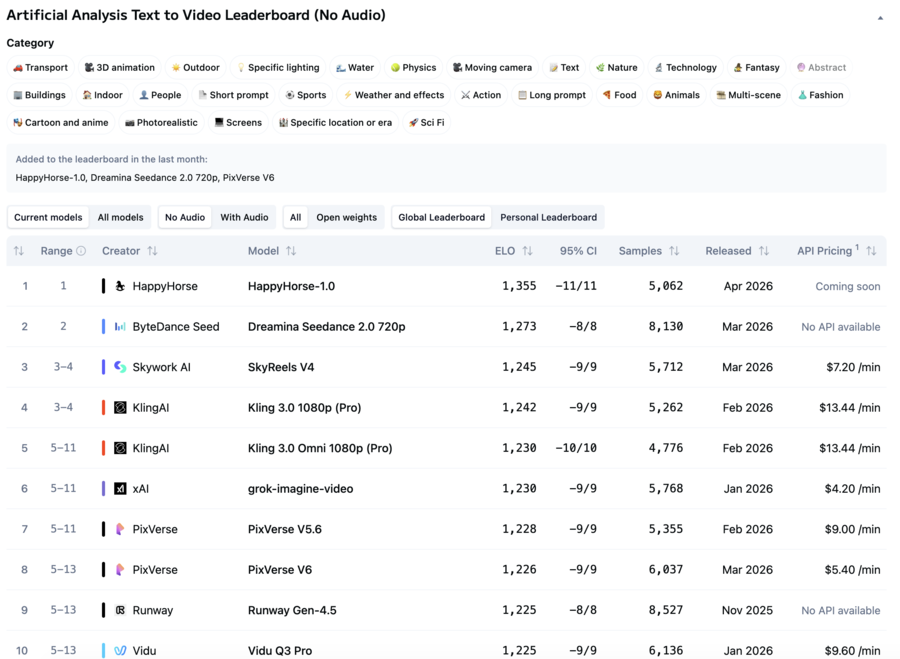

In the latest snapshot of Artificial Analysis’ Video Arena, a model called Happy Horse 1.0 has, by current public rankings, broken into the top of every leaderboard that matters — and on the text-to-video (no audio) board, it has taken the #1 position outright.

According to the live rankings, Happy Horse 1.0 is sitting at Elo 1,355 on text-to-video (no audio), 82 points ahead of the previous leader, ByteDance’s Dreamina Seedance 2.0 720p at 1,273. SkyReels V4 (1,245), Kling 3.0 1080p Pro (1,242), and Kling 3.0 Omni Pro (1,230) round out the top five. The ranking is based on more than 5,000 blind user matchups, and Artificial Analysis has flagged Happy Horse 1.0 as one of a handful of new models added to the leaderboard in the past month.

On the image-to-video (no audio) track, industry observers have reported Happy Horse 1.0 posting an even larger lead. And on the tougher with-audio track — where models are judged on whether image and sound actually land together — the same model is currently tracking at global #2, behind only Seedance 2.0.

Three tracks. Two outright victories. One close second against the reigning champion. All from a model that, a week ago, nobody had heard of.

Why This “#1” Is Real: Inside the Blind Testing Methodology

What makes this moment hard to dismiss as a marketing stunt is the benchmark itself. The Artificial Analysis Video Arena doesn’t rank models on self-reported scores or single-demo flex pieces. It runs on Elo ratings derived from thousands of real users doing blind, side-by-side comparisons of two generated clips — without knowing which model produced which. That methodology is the closest thing the video-generation world has to LMArena, and it’s the reason a lead of this size carries more weight than any number a lab quotes in its own blog post.
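To give a rough sense of what an 82-point Elo gap means in a single head-to-head vote, here is a minimal sketch. It assumes the conventional logistic Elo formula with a 400-point scale; the article does not describe the exact rating model Artificial Analysis uses, so the function name, the scale, and the resulting probability are illustrative only.

```python
def expected_win_probability(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Chance that model A is preferred over model B in one blind matchup.

    Assumes the standard logistic Elo curve with a 400-point scale.
    This is an assumption, not the Arena's documented formula.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Ratings quoted in the article for the text-to-video (no audio) board.
happy_horse_rating = 1355
seedance_rating = 1273

win_probability = expected_win_probability(happy_horse_rating, seedance_rating)
print(f"Expected preference rate: {win_probability:.1%}")
```

Under that assumption, the quoted ratings correspond to Happy Horse 1.0 being preferred in a little over 60% of direct comparisons with Seedance 2.0: a clear edge, but far from a sweep.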

The statistical picture is unusually clean. Happy Horse 1.0’s Elo of 1,355 carries a 95% confidence interval of ±11 points, so even the pessimistic end of its range (1,344) sits 63 points above the optimistic end of Seedance 2.0’s range (1,281). On preference-based arenas, a separation of this magnitude, one that persists across thousands of matchups and holds outside the confidence bands of the next-ranked model, is generally treated as meaningful signal rather than statistical noise.
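The interval comparison can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in the article (a rating of 1,355, a ±11 half-width, and Seedance 2.0’s upper bound of 1,281); the function name is hypothetical, and non-overlapping intervals are a quick heuristic rather than a formal significance test.

```python
def interval_separation(leader_rating: float, leader_half_width: float,
                        rival_upper_bound: float) -> float:
    """Gap between the leader's lower bound and the rival's upper bound.

    A positive value means the two confidence intervals do not overlap.
    """
    leader_lower_bound = leader_rating - leader_half_width
    return leader_lower_bound - rival_upper_bound


# Figures as quoted in the article.
gap = interval_separation(leader_rating=1355, leader_half_width=11,
                          rival_upper_bound=1281)
print(f"Intervals are separated by {gap} Elo points")
```

A positive gap here means the leader’s worst-case rating still exceeds the runner-up’s best-case rating.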

That said, rankings on preference-based arenas are dynamic and can shift as new votes and new model entries arrive. What is clear as of April 8 is this: on current blind user preference, Happy Horse 1.0 is the model real users are picking most often in head-to-head comparisons on the Arena’s most-watched text-to-video and image-to-video tracks. And it has been picking up roughly as many daily samples as the frontier models it is beating.

It’s also worth noting, in the interest of completeness, that Artificial Analysis lists Happy Horse 1.0’s API status as “Coming soon” — the model is visible and votable on the Arena, but not yet broadly available for public production use, which is not unusual for models in their early Arena window.

The Detective Story: Who Built Happy Horse 1.0?

The moment Happy Horse 1.0 showed up under an unknown banner, English-speaking AI Twitter went into full Sherlock mode. Was it a stealth Wan 2.7 from Alibaba’s Tongyi Lab? A covert Seedance variant from ByteDance? A research project from some unannounced lab in Asia? Brent Lynch, venturetwins, and even Grok were all asked to weigh in. Nobody had a confirmed answer.

In the days since, the most widely discussed theory — originating in Chinese-language tech media and now circulating in English-speaking AI circles — points to a team that, if correct, would explain almost every clue. Multiple Chinese-language reports have linked Happy Horse 1.0 to a new team reportedly led by Zhang Di, the former vice president at Kuaishou and technical lead behind Kling — one of the very models currently sitting near the top of the same leaderboard. Public disclosures indicate Zhang joined Alibaba in late 2025 to run the Future Life Lab (未来生活实验室) inside the Taotian Group.

The Future Life Lab is, by Alibaba’s own positioning, a serious bet. It sits inside Taotian’s core e-commerce algorithms organization — reportedly the largest applied computer-vision workload in China — and was set up to combine top research talent with production-scale compute to push on large models, multimodal systems, and AI-native applications. Despite being only a little over a year old, the lab has reportedly published more than ten papers at top-tier conferences.

If the attribution holds, the narrative is almost too clean: the person who built Kling left his old company, took over a new lab at Alibaba, and his first public output is a model that beats his previous work on the same blind benchmark. Neither Alibaba nor anyone publicly associated with Happy Horse has officially confirmed the attribution as of publication. For now, it remains the most credible theory — not a verified fact.

The Post-Sora Era, in One Data Point

For eighteen months, the frontier of AI video has been an exhausting arms race between Runway, Pika, Kling, Sora, Veo, and Seedance. Each release moved the needle a little. Nobody broke away.

The post-Sora era won’t be won on “does it move?” It will be won on physics, on motion consistency across shots, on whether image and sound actually land together, and on how often real viewers — not curated reels — prefer the result. On the blind-testing dimension that matters most, Happy Horse 1.0 has, at least for the moment, done something the rest of the top tier has not: it has broken away.

In AI video, there are no permanent champions. There are only algorithms that keep winning the blind tests. Right now, one is winning louder than the rest.

Information on the model is currently available at happyhorses.io. The full leaderboard methodology can be reviewed on the Artificial Analysis Video Arena. Rankings described in this article reflect the public state of the leaderboard as of April 8, 2026.
